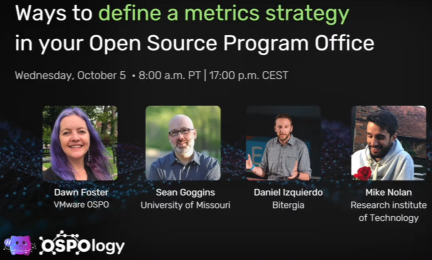

OSPOlogy recently hosted a discussion about the role of metrics in public administrations' efforts to migrate to free software / open source. Metrics serve two purposes: (1) to let planners and managers evaluate their own work, and (2) to show implementers and higher-ups how the project is performing. Every change creates potential for resistance, so it is important to show that the work is worthwhile, either by presenting metrics directly or by using them to back up a pitch or a narrative.

The discussion involved developers and users of the Chaoss metrics system, but the observations should be of general interest.

Participants noted one major shift. Open Source Programme Offices (OSPOs) started out focused on the legal and compliance side of using or developing open source. That work remains, but their remit is now growing to include strategy.

One challenge is the gaming of metrics: once someone declares a metric important, contributors begin to focus on improving that metric, which might not be the same as improving what the project needs. In this sense metrics are markers. The number of ashtrays in a person's house is a fairly reliable marker of lung health, but you cannot change anyone's lung health by changing the number of ashtrays in their house. One participant gave the example of counting commits, which leads developers to split their work into smaller pieces.

Participants highlighted that this problem is particularly likely when metrics are applied to individuals. It may be better to avoid individual metrics and focus instead on the health of the ecosystem.

A second challenge is that metrics have to be detailed to be useful. The old metrics, mostly based on counting, were quite susceptible to gaming. But the more detailed metrics become, the less accessible or interesting they are to a general audience. Increased use of metrics software therefore has to be paired with internal expertise on how to interpret the metrics and how to translate them into something useful for others. For Chaoss, participants highlighted the related Augur tool.

In discussing the decisions that metrics can support, the participants put together a set of evaluation questions that could be useful for many projects, with or without metrics:

Organisation planning

- Are open source strategies aligned across business units?

Execution

- Is the project working with the broader developer community? How can this be measured?

- What are the benefits of working in the open? Is this project getting those benefits?

Project health

- How can we evaluate where a project needs help?

- What are the dependencies in internal toolchains? Are there risks, and can they be mitigated?

- Sustainability over time:

  - How many maintainers are there? (the "bus factor", pp 42-47)

  - Are maintainers burnt out?

  - Is there a good rate or pipeline of new contributors who could become maintainers?

  - How can we identify newcomers and up-and-coming contributors who could become maintainers, so they can be encouraged or mentored?

  - How do we keep essential people from leaving?

Financial

- What is the value of the project or its components? (for a purchase, a contract, or even a merger or acquisition)
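The "bus factor" question under Project health can be turned into a number. One common definition, assumed here rather than taken from the discussion, is the smallest number of contributors who together account for at least half of all contributions. The sketch below applies that definition to a plain list of commit authors; the function name `bus_factor`, the `threshold` parameter and the input format are hypothetical, and real data would come from a project's version-control history.

```python
from collections import Counter


def bus_factor(commit_authors, threshold=0.5):
    """Smallest number of contributors whose commits together make up
    at least `threshold` of all commits.

    Assumed (hypothetical) input: a list with one author name per commit.
    """
    counts = Counter(commit_authors)
    total = sum(counts.values())
    if total == 0:
        return 0  # no history, nothing to measure
    covered = 0
    # Walk from the most active contributor down, so the count is minimal.
    for people, (_, n) in enumerate(counts.most_common(), start=1):
        covered += n
        if covered >= threshold * total:
            return people
    return len(counts)  # only reached if threshold > 1


# One author wrote 6 of 10 commits, so losing that person would lose
# more than half of the project's recorded work.
print(bus_factor(["ana"] * 6 + ["ben"] * 3 + ["chen"]))
```

Because this sketch counts commits, it inherits the gaming problem described earlier: a contributor who splits work into many small commits will look more central than they are. Weighting by other contribution types would change the result.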

Looking ahead, participants suggested that metrics might indicate how long it takes to get a new feature accepted, or how long it takes to fix a bug.
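A time-to-fix indicator could be as simple as the median time between opening and closing a bug report. The sketch below assumes each issue is available as an `(opened, closed)` pair of datetimes, with `closed` set to `None` while the issue is still open; the function name and data shape are hypothetical and not tied to any particular tracker or to Chaoss.

```python
from datetime import datetime
from statistics import median


def median_days_to_close(issues):
    """Median number of days between opening and closing an issue.

    `issues` is a list of (opened, closed) datetime pairs (hypothetical
    format); issues with closed=None are still open and are skipped.
    """
    durations = [
        (closed - opened).total_seconds() / 86400  # seconds per day
        for opened, closed in issues
        if closed is not None
    ]
    return median(durations) if durations else None


issues = [
    (datetime(2021, 3, 1), datetime(2021, 3, 3)),   # fixed in 2 days
    (datetime(2021, 3, 1), datetime(2021, 3, 11)),  # fixed in 10 days
    (datetime(2021, 3, 5), None),                   # still open
    (datetime(2021, 3, 2), datetime(2021, 3, 6)),   # fixed in 4 days
]
print(median_days_to_close(issues))
```

The median is used rather than the mean so that a few long-neglected issues do not dominate the figure. Skipping open issues biases the result towards faster fixes, so in practice the number of still-open issues is worth reporting alongside it.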

Participants also mentioned that the Chaoss project is looking for input on further metrics to investigate. Input can be given through their "Value" working group, which will soon be renamed the "OSPO Working Group" and can be found on their Participate page.

If you have further information about this project or about similar projects, please leave a comment below. All comments are read by an OSOR facilitator.